A half-alive-half-dead cat and the beginning of Quantum Information

You may have heard of quantum information promising many prospects in revolutionizing the future of computers? When I was a high-schooler years ago, I also got amazed by these statements repeated in pop science articles. But to what extent this statement is true? Before we come to a final conclusion, let’s take a look on historical milestones of the field.

The duration from the 1900s to 1930s was the golden age of quantum mechanics with many groundbreaking findings that established a concrete theoretical foundation for quantum information. Some scientists believed that weird properties of subatomic particles might be of advantage to computation.

Although first steps to the field had been taken by 1960, there was no significant move until the 1980s. Richard Feynman proposed a model in which quantum computer can simulate the evolution of quantum systems in 1981. Throughout the following 10 years, introduced algorithms proved that quantum computers really outperforms classical computers in certain problems and are even exponentially faster in Deutch and Jozsa’s algorithm. Most notable was the algorithms of Grover and Shor. Whereas Grover proposed a quantum search algorithm with complexity reduced to the square root, Shor’s algorithm in factorizing into prime numbers made a leap in computational complexity, from exponential to polynomial time, for a practically meaningful problem. The field of quantum computation took off since then.

We’ve already had a certain number of quantum-based algorithms superior to their classical counterparts. We nevertheless hardly materialize the outstanding computational power of quantum computers mostly due to the hardware development still lagging far behind. Yes, quantum computing is still an new area full of potential, but we may not see a quantum computer good enough to replace classical computers in the next 10 years. That’s my opinion, and even well-known experts’, to the opening question.

Get back to the root, which property makes quantum computing overpowering classical computing. You must have already known that each bit carries a binary value, which is 0 or 1, corresponding to logical low or logical high respectively. That being said, to be capable of representing all binary numbers whose length is $n$, we need $2^n$ bits. What an exponential cumbersome!

On the other hand, a qubit, the quantum anology of bit, stores its signal being either 0 or 1, or both values at the same time. This means we only need $n$ qubits to carry the same information as $2^n$ bits do. However, if we measure a qubit, we only get 0 or 1, each with a probability predetermined by the qubit. At the time of measurement, the qubit collapses to the value we just got, which means the qubit is no different than a classical bit after our measurement.

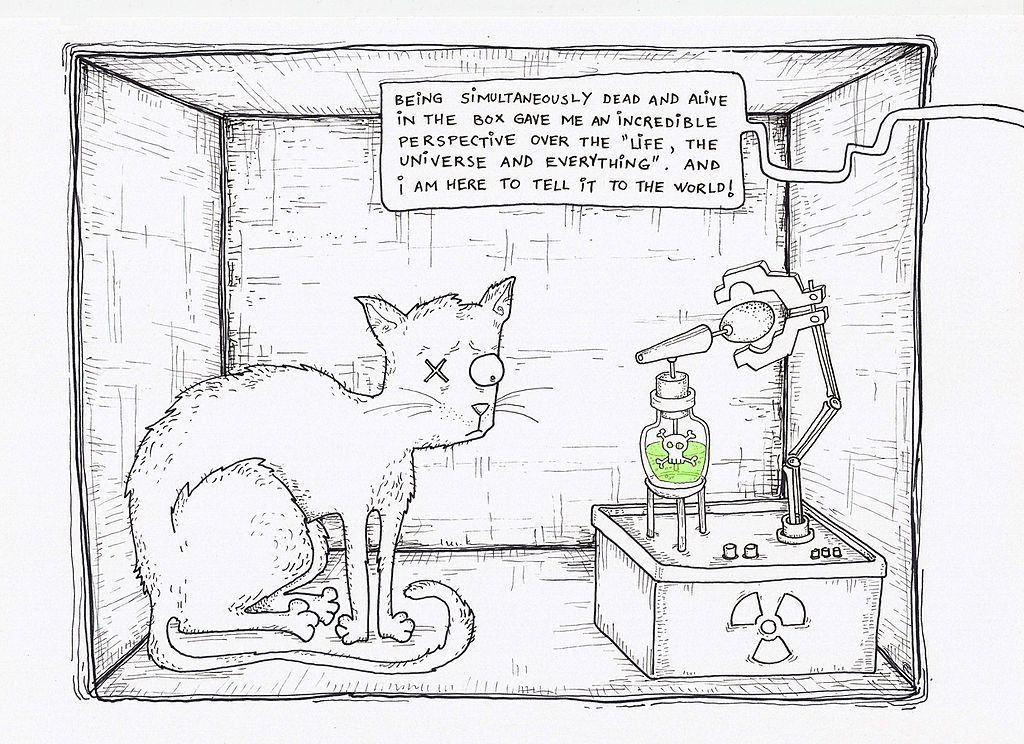

One popular paradigm for this concept is the Schrödinger’s Cat experiment: A cat is placed inside a sealed box with a Geiger counter, a flask of poison, and a piece of radioactive material. The material is so weak that it’s just able to release, in one hour, at most a single radioactive ray with 50%-probability. If the Geiger counter detects radioactivity, it will destroy the flask and release poison that immediately kills the cat.

After one hour, you might wonder whether the cat is still alive, but you don’t know until you open the box. Before you open it, the cat is in a 50-50 situation. When you open and observe inside the box, the cat must be either alive or dead.

By ADA and Neagoe, originally published in Omagiu magazine

In this experiment, the 50-50 situation of the cat before your opening is called superposition, and this is completely analogous to the qubit state I’ve mentioned. The act of opening the box updates the cat’s state into one or the other, just like the act of measuring the qubit value. A difference of qubit from the Schrödinger’s experiment is that a qubit hasn’t to be in 50-50; in fact, it can be in 70-30, 40-60. Specifically, just as classical bit, the value of qubit after measurement is either 100-0 or 0-100 (probability of being 0 and 1).

In short, qubits have the superposition ability that makes able them to store a large amount of information. Unfortunely, we never know the complete information a qubit carries. Even “destroying” the qubit only gets us a small piece of its initial data. It can be said that qubit superposition is the first and most important principle of quantum computing, but manipulating this quantum property effectively is more much than a mind-boggling game.